Reflection Post #6 – AI

Generative AI is often framed as a black box that spits out either magic or misinformation. But as Dr. Normand Roy from the University of Montreal explained, the secret to making AI a truly effective partner in education isn’t just the tool you choose; it’s the context you provide.

From RAG to Deep Research, Dr. Roy broke down the professional workflows that turn AI from a toy into a powerful research assistant.

What is RAG?

The most important acronym to learn in AI today is RAG (Retrieval-Augmented Generation).

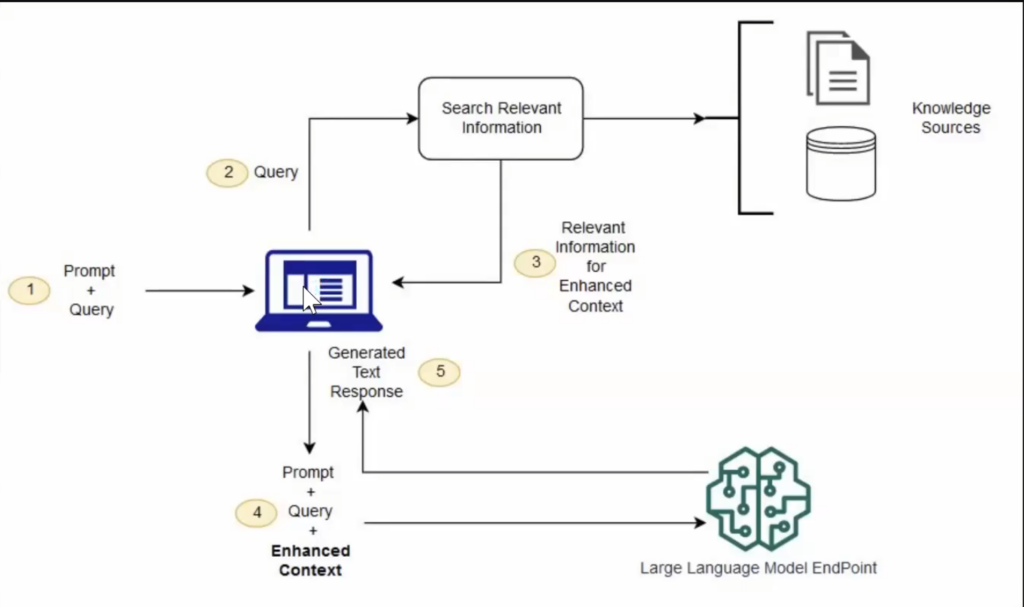

Standard AI (like the free version of ChatGPT) relies on its pre-trained memory. If it doesn’t know the answer, it might make something up. RAG changes the math. Instead of asking the AI to remember everything, you give it a specific knowledge source, like a textbook, a research paper, or a syllabus.

- How it works: You upload a PDF or point the AI to a specific folder. The AI is then instructed to look at your documents first before answering.

- Why it matters: It drastically reduces misinformation. If you’re a biology student, you can upload your specific OER (Open Educational Resource) and ask the AI to quiz you only on that content.
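To make the idea concrete, here is a minimal sketch of the retrieve-then-ask pattern. It is not how ChatGPT or any particular product implements RAG: real systems usually rank passages with embeddings, while this toy version just counts shared words. The course notes and the function names `tokenize`, `retrieve`, and `build_prompt` are made up for illustration, and the sketch stops at building the prompt rather than calling a model.

```python
import re

def tokenize(text):
    """Lowercase a string and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, passages, top_k=2):
    """Rank passages by how many words they share with the question."""
    q_words = tokenize(question)
    scored = [(len(q_words & tokenize(p)), p) for p in passages]
    scored = [item for item in scored if item[0] > 0]  # drop passages with no overlap
    scored.sort(key=lambda item: item[0], reverse=True)
    return [p for _, p in scored[:top_k]]

def build_prompt(question, passages):
    """Put the retrieved passages in front of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )

# Hypothetical excerpts standing in for an uploaded biology OER.
course_notes = [
    "Mitochondria produce most of the ATP in a cell.",
    "Ribosomes assemble proteins from amino acids.",
    "The cell membrane controls what enters and leaves the cell.",
]

question = "What do mitochondria produce?"
prompt = build_prompt(question, retrieve(question, course_notes, top_k=1))
print(prompt)
```

The printed prompt contains only the mitochondria passage, so the model is pointed at the relevant part of your material instead of relying on memory.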

Chain of Thought for an AI

Many modern AI models now use a chain-of-thought process. Instead of jumping straight to an answer, the AI follows a sequence:

- Search: Look at the uploaded documents.

- Web Search: Find supporting data from the live internet.

- Synthesize: Combine the two to build a final response.

Dr. Roy demonstrated this by toggling on the model’s visible reasoning. With it switched on, you can see exactly which websites the AI visited and which parts of your document it cited.
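The Search, Web Search, Synthesize sequence can be sketched as a small pipeline that keeps a trace of what each step looked at. This is only an illustration of the idea, not how any real model reasons internally: the "web" here is a stand-in dictionary, the file names and URL are invented, and `find_matches` and `answer` are hypothetical helper names.

```python
def find_matches(question, sources):
    """Return the names of sources that share at least one word with the question."""
    words = set(question.lower().split())
    return [name for name, text in sources.items()
            if words & set(text.lower().split())]

def answer(question, uploaded, web):
    """Run the three steps in order and record what each one used."""
    trace = []

    doc_hits = find_matches(question, uploaded)   # Step 1: uploaded documents
    trace.append(("Search", doc_hits))

    web_hits = find_matches(question, web)        # Step 2: stand-in for a live web search
    trace.append(("Web Search", web_hits))

    combined = doc_hits + web_hits                # Step 3: merge both sets of sources
    trace.append(("Synthesize", combined))
    return combined, trace

# Hypothetical uploaded files and a hypothetical web page.
uploaded = {
    "syllabus.pdf": "week 3 covers photosynthesis",
    "rubric.pdf": "grading criteria for essays",
}
web = {"example.org/plants": "photosynthesis converts light into energy"}

sources, trace = answer("explain photosynthesis", uploaded, web)
for step, used in trace:
    print(f"{step}: {used}")
```

Reading the trace is the point: like the visible reasoning Dr. Roy showed, it lets you check which document and which website fed into the final response.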

Deep Research: High Power, High Cost

A recent feature in tools like Gemini and ChatGPT is Deep Research. Unlike a standard search that looks at a few links, Deep Research can scan hundreds of websites, break the data into pieces, and build a comprehensive report.

The Catch: The Environmental Price Tag

Deep Research is computationally expensive.

- The Water Cost: Dr. Roy said some estimates suggest that every 50 ChatGPT requests use about half a liter of water to cool data centers.

- The Rule of Thumb: If you just need to know the height of the Eiffel Tower, use Wikipedia or a standard search engine. Only use Deep Research for complex, multi-layered problems.
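To get a feel for the scale, the estimate Dr. Roy quoted can be turned into a quick back-of-the-envelope calculation. This sketch assumes the half-liter-per-50-requests figure applies evenly to every ordinary request. No separate number was given for Deep Research, so it is not modeled here. The function name `water_liters` is just for illustration.

```python
# Estimate quoted in the talk: about 0.5 liters of cooling water per 50 requests.
LITERS_PER_50_REQUESTS = 0.5

def water_liters(requests):
    """Estimated liters of water for a given number of ordinary requests."""
    return requests * LITERS_PER_50_REQUESTS / 50

print(water_liters(1))     # one request
print(water_liters(50))    # the quoted batch of 50
print(water_liters(1000))  # a heavy month of use
```

Under that assumption, a single request works out to roughly 10 milliliters and a thousand requests to about 10 liters, which is why saving the heavy tools for heavy problems adds up.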

Summary

Ultimately, professional workflows like RAG and Deep Research reduce the risk of misinformation and move us toward a model of Universal Design, where technology adapts to our specific needs rather than the other way around. However, this power comes with a new responsibility to balance our informational needs against the environmental costs of high-compute tasks. As we integrate these tools into our academic and professional lives, the goal is to stop treating AI like a perfect machine that has all the answers. Instead, think of it as a powerful digital workbench: a tool that only works well if we keep a close eye on it and feed it the right information ourselves.